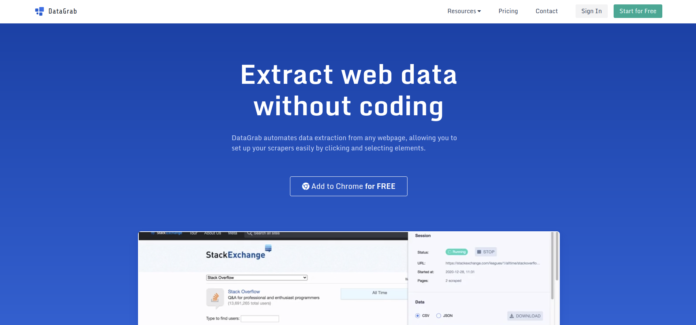

This article describes the best DataGrab alternatives. DataGrab is an online data scraping tool that extracts URLs from websites and retrieves the content they host. It can batch-download data from the internet and save web pages for local development. It is designed to be fast, simple to use, and efficient at caching responses. You can use it to find your competitors' offerings and prices. Results export easily from DataGrab to a CSV file, which makes it easier to compare your products with those of your rivals.
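
DataGrab's internals are not documented in this article, so the following is only a generic sketch of what "caching responses" during a batch download can mean. It is written in Python, and the `CachedFetcher` class and `fetch_with_urllib` function are hypothetical names. Each URL is fetched once, and repeated requests are served from memory.

```python
from typing import Callable, Dict, Iterable
from urllib.request import urlopen


class CachedFetcher:
    """Hypothetical helper: fetch each URL once, then serve repeats from memory."""

    def __init__(self, fetch: Callable[[str], str]) -> None:
        self._fetch = fetch  # any callable mapping a URL to page text
        self._cache: Dict[str, str] = {}
        self.network_calls = 0  # how many times the underlying fetch ran

    def get(self, url: str) -> str:
        if url not in self._cache:
            self._cache[url] = self._fetch(url)
            self.network_calls += 1
        return self._cache[url]

    def get_many(self, urls: Iterable[str]) -> Dict[str, str]:
        """Batch download: return {url: content}, reusing cached responses."""
        return {url: self.get(url) for url in urls}


def fetch_with_urllib(url: str) -> str:
    """One possible real fetcher built on the standard library."""
    with urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


# Usage (performs network requests):
# fetcher = CachedFetcher(fetch_with_urllib)
# pages = fetcher.get_many(["https://example.com/", "https://example.com/"])
```

Keeping the fetch function injectable makes the cache easy to test without network access. A production tool would likely also persist the cache to disk and honour expiry headers.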

Even websites that do not let you export their databases for current or future use can be a source of useful information. The data is readily available and gathered automatically from every corner of the internet. DataGrab offers access to what it bills as the largest database of blogs, news, video, and forums. If you want to download a report for a certain term or domain, it will provide the information you need.

Top 15 Best DataGrab Alternatives In 2022

The 15 best DataGrab alternatives are explained below.

1. NoCoding Data Scraper

NoCoding Data Scraper is a straightforward web scraping application that collects data from other websites for use in SEO, affiliate marketing, and other contexts. A few simple clicks extract your data in minutes. Best of all, it requires no programming experience or knowledge: the program scrapes the relevant data instantly.

After the data is displayed in a neatly prepared table or chart, you can download it or transfer it to Excel or other applications to edit it as needed. The application can scrape many kinds of data, including text, phone numbers, email addresses, postal addresses, URLs, location information, and Facebook and Twitter accounts. Overall, NoCoding Data Scraper is a fantastic program worth considering as a DataGrab alternative.
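
As a rough illustration of scraping email addresses and URLs from page text, here is a minimal Python sketch that uses regular expressions. It is not how NoCoding Data Scraper works internally. The patterns and the `extract_contacts` function are assumptions, and the patterns are deliberately simple, so they will miss some valid addresses.

```python
import re
from typing import Dict, List

# Deliberately simple patterns; they will miss some valid addresses and URLs.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
URL_RE = re.compile(r"https?://[^\s\"'<>]+")


def _unique(items: List[str]) -> List[str]:
    """Drop duplicates while keeping first-seen order."""
    return list(dict.fromkeys(items))


def extract_contacts(text: str) -> Dict[str, List[str]]:
    """Return unique email addresses and URLs found in page text."""
    emails = EMAIL_RE.findall(text)
    # Trailing punctuation usually belongs to the sentence, not the URL.
    urls = [u.rstrip(".,;:!?)") for u in URL_RE.findall(text)]
    return {"emails": _unique(emails), "urls": _unique(urls)}
```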

2. MyDataProvider

MyDataProvider is a web scraping service for e-commerce websites that lets customers obtain product details, prices, and reviews for any item listed on the major international marketplaces. The service can also produce price comparisons between Amazon and other websites. It is accessible from any internet-capable device, such as a PC, laptop, tablet, or smartphone.

When you request more information about a product, data for product comparisons is gathered automatically. For convenience and future reference, all product information is saved, so you can request one or more of your favourite products again later. The service is designed for people who lack the time for laborious product research. With MyDataProvider, you can easily keep track of the goods you are interested in.

3. Outscraper

Outscraper is not just a scraper. It is also a fully scalable data-mining platform that lets you build crawlers to gather vast amounts of data automatically. It can extract static online content, including HTML pages, text, and images. It is easy enough to use that you can begin scraping data within 30 minutes of downloading it. One of its key features is the ability to scrape data using regular expressions.

This gives you full control over where and what you scrape (for example, which specific fields). With object-oriented scraping, you can quickly reuse code to scrape multiple websites that share the same structure. You can also change your extraction criteria quickly by swapping the code of one extraction for another. Almost every aspect of the extraction procedure can be customised, including Outscraper's appearance.
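
To make the idea of reusable, object-oriented extraction concrete, the sketch below defines one extractor per page layout and runs it across many pages. This is not Outscraper's API. `RegexExtractor` and its field patterns are hypothetical, and regular expressions are brittle on real HTML, so treat it as an illustration only.

```python
import re
from typing import Dict, List, Optional


class RegexExtractor:
    """Hypothetical reusable extractor: one regex with a single capture group per field."""

    def __init__(self, patterns: Dict[str, str]) -> None:
        # re.S lets "." match newlines so fields may span lines.
        self._compiled = {name: re.compile(p, re.S) for name, p in patterns.items()}

    def extract(self, html: str) -> Dict[str, Optional[str]]:
        """Return the first captured value for each field, or None if it is absent."""
        result: Dict[str, Optional[str]] = {}
        for name, rx in self._compiled.items():
            match = rx.search(html)
            result[name] = match.group(1).strip() if match else None
        return result

    def extract_all(self, pages: List[str]) -> List[Dict[str, Optional[str]]]:
        """Apply the same field definitions to every page that shares the layout."""
        return [self.extract(page) for page in pages]


# One extractor per site layout; reuse it across all pages with that layout.
product_pages = RegexExtractor({
    "title": r"<h1>(.*?)</h1>",
    "price": r'class="price">\s*([^<]+)<',
})
```

Changing the extraction criteria then amounts to passing a different pattern dictionary, which mirrors the code-swapping workflow described for Outscraper.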

4. ScrapeHunt

ScrapeHunt is a web scraping database and API tool that lets you save and reuse data scraped from any website. Its fast, high-performance architecture is designed to make extraction quicker and usage simpler than comparable services. When you run a search, the program returns a plain-text list of URLs to scrape. By providing complete and accurate scraping data for use in applications, it can save hundreds of hours of labour.

It is helpful for tracking mentions of specific products or brands, learning more about websites, compiling lists of product categories, and building price-comparison tools. Data can be retrieved either by browsing the database or through the ScrapeHunt API. Clients send HTTP requests to the API, which returns JSON-formatted responses. Developers who register on the website can create an API key, which must be included in every API call.
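
The request pattern described above (an HTTP call that carries an API key and returns JSON) can be sketched with Python's standard library. The endpoint, the `q` parameter, and the `X-Api-Key` header are placeholders, not ScrapeHunt's documented interface. Check the service's own API reference for the real names.

```python
import json
from typing import Any
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Placeholders (assumptions), not ScrapeHunt's documented endpoint or header names.
BASE_URL = "https://api.example.com/v1/search"
API_KEY_HEADER = "X-Api-Key"


def build_request(api_key: str, query: str) -> Request:
    """Build a GET request that carries the API key, as every call must."""
    url = f"{BASE_URL}?{urlencode({'q': query})}"
    return Request(url, headers={API_KEY_HEADER: api_key, "Accept": "application/json"})


def parse_response(body: bytes) -> Any:
    """Decode a JSON-formatted response body."""
    return json.loads(body.decode("utf-8"))


def search(api_key: str, query: str) -> Any:
    """Send the request and return the decoded JSON (performs a network call)."""
    with urlopen(build_request(api_key, query), timeout=10) as resp:
        return parse_response(resp.read())
```

Separating request building from sending keeps the key-handling logic testable without touching the network.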

5. ScraperBox

ScraperBox is a web scraping API that lets developers gather data from many sources. Its intuitive interface speeds up data collection, and configuration is simple: you provide a file that lists the data you want to gather. It offers a practical and reliable HTTP API for a range of web scraping operations. ScraperBox can manage cookies, follow redirects, process interactive HTML forms, and deal with output encoding.

Its range of functions makes it useful for URL monitoring, web development, and web design, for example to collect information from social networks or other external websites and display it on your own site. The API is designed to be user-friendly and adaptable, so any type of website can use it. Because it supports multiple languages, it suits international data such as news articles, product pricing, and material for sentiment analysis. It can also scrape information from well-known e-commerce websites such as Amazon, eBay, and Shopify stores.

6. ScrapingBee

ScrapingBee provides a web scraping API for extracting information from websites. With it, you can build data collection or data export solutions for your company. It is well suited to e-commerce, market research, business intelligence, product research, and data mining. The gathered information, including website content, product details, and prices, can be stored or analysed.

Although the service is geared towards developers and businesses, anyone can use it. Its API-based architecture lets users add web scraping to their existing apps. It downloads data securely using proxies and authentication. Regular server backups store the website data daily on a secure server, which reduces how often proxies and scraping bots need to be used. It outputs the data in your preferred format (JSON or CSV).
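
Offering JSON or CSV output usually comes down to serialising the same list of records two ways. The following Python sketch shows that locally. It does not call ScrapingBee, and the `serialize` function and its `fmt` argument are hypothetical.

```python
import csv
import io
import json
from typing import Dict, List


def serialize(records: List[Dict[str, str]], fmt: str = "json") -> str:
    """Render scraped records as JSON or CSV text (hypothetical helper)."""
    if fmt == "json":
        return json.dumps(records, indent=2)
    if fmt == "csv":
        # Union of keys in first-seen order, so records with extra fields still fit.
        fieldnames = list(dict.fromkeys(key for rec in records for key in rec))
        buf = io.StringIO()
        writer = csv.DictWriter(buf, fieldnames=fieldnames, restval="")
        writer.writeheader()
        writer.writerows(records)  # missing fields are written as ""
        return buf.getvalue()
    raise ValueError(f"unsupported format: {fmt!r}")
```

The CSV branch collects every key across all records rather than trusting the first one, because scraped pages often expose slightly different fields.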

7. Data Miner

Data Miner is a web data extraction application that lets you parse web data, extract data from websites, and analyse the extracted data. To use it, enter the URL you want to scrape and choose your scraping options. The tool carries out the rest automatically.

Data Miner is built to be fast and reliable, and to cope with websites that do not follow expected standards, such as sites without clean URLs, sites that place AJAX, JavaScript, and CSS in separate directories, or sites that use the same ID for several elements. It can be used as is or extended to meet specific requirements. To extract data from a given website, it uses custom classes that provide all the required actions. Overall, Data Miner is a fantastic product worth considering as a DataGrab alternative.

8. Data Excavator

Data Excavator is an online web data scraper that collects the data you need from e-commerce websites such as eBay, Amazon, Rakuten, and Taobao. It can efficiently gather large amounts of product information, which you can use to gain insight and predict trends. Batch mode lets you scrape several web stores quickly. You can also scrape product photos in all sizes, additional product information, titles, and variations.

A built-in browser removes the need to install additional browser plugins when automating data extraction, which saves time. Full-text search lets you locate data even if you did not know it existed. The tool can compare any two attributes, which makes it simple to identify, for example, a product's largest size. The Image Editor lets you crop and resize images to compare different products.

9. QuickScraper

QuickScraper is a robust, user-friendly proxy API that runs in your application and transfers data from the web to your database. You can define scraping jobs and start or stop them whenever you want, without worrying about the tool slowing down your application while it crawls. It also lets you build bots or scrapers that run on your own servers instead of relying on external services.

The key benefit is that queries appear to originate from your own servers, which the vendor presents as a way to keep third-party websites from blocking your crawler. The second benefit is that you can analyse and store the scraped data on your own servers. The crawler can also run scheduled tasks. QuickScraper offers a straightforward interface that is easy to use and customise. Overall, QuickScraper is a fantastic tool worth considering as a DataGrab alternative.

10. ScrapingBot

ScrapingBot is a web scraping API that makes it easy to extract structured data from websites. By automating repetitive procedures and correcting common mistakes, the tool increases your productivity. Thanks to its plain and simple Python API, you can get started with just a few lines of code. You can also schedule web scrapes with ScrapingBot directly from the command line.

You can either let ScrapingBot handle the difficult work for you or integrate it with your own application. It offers a straightforward way to describe typical jobs and workflows, which makes it simple to keep data consistent even when many people work on the same code base. Further capabilities include dynamic crawling and extraction, recurring scheduled extractions, and PDF reports with extraction results and logs. At present, it supports XPath, CSS, and regex queries on HTML pages.
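
XPath and regex queries on HTML can both be tried with Python's standard library, with one caveat: `xml.etree.ElementTree` supports only a limited XPath subset and parses only well-formed markup, so real-world HTML generally needs a tolerant parser such as lxml. CSS selectors are not available in the standard library at all (third-party libraries such as BeautifulSoup provide `select()`). This sketch is independent of ScrapingBot, and the sample page and function names are made up for illustration.

```python
import re
import xml.etree.ElementTree as ET
from typing import List, Tuple

# Made-up, well-formed sample page (ElementTree rejects malformed HTML).
PAGE = """<html><body>
  <div class="product"><h2>Lamp</h2><span class="price">$20</span></div>
  <div class="product"><h2>Desk</h2><span class="price">$150</span></div>
</body></html>"""


def xpath_products(markup: str) -> List[Tuple[str, str]]:
    """Use ElementTree's limited XPath subset to pair each title with its price."""
    root = ET.fromstring(markup)
    pairs = []
    for product in root.findall(".//div[@class='product']"):
        title = product.findtext("h2", default="")
        price = product.findtext("span[@class='price']", default="")
        pairs.append((title, price))
    return pairs


def regex_prices(markup: str) -> List[str]:
    """The same prices via a regular expression (brittle if the markup changes)."""
    return re.findall(r'<span class="price">([^<]*)</span>', markup)
```

The XPath version keeps each title attached to its own price because it walks the document structure, whereas the regex version only sees a flat string.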

11. Scraper

Scraper is a browser extension that lets users extract data from websites when simply viewing the page is not enough. It gathers structured data by scraping website content and uploads it to your database or an Excel file. With Scraper, you can collect information from websites that require a login or password, including blogs, images, web pages, emails with attachments, and products in online stores.
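
Scraper handles its own exports. As a generic sketch of the "upload to your database" step, the Python code below stores scraped rows in SQLite. The `scraped` table, its columns, and the `save_rows` function are hypothetical.

```python
import sqlite3
from typing import Iterable, Tuple


def save_rows(conn: sqlite3.Connection, rows: Iterable[Tuple[str, str]]) -> int:
    """Upsert (url, title) rows into a hypothetical `scraped` table; return the row count."""
    with conn:  # commits on success, rolls back on error
        conn.execute("CREATE TABLE IF NOT EXISTS scraped (url TEXT PRIMARY KEY, title TEXT)")
        # Re-scraping a URL replaces its earlier row instead of duplicating it.
        conn.executemany("INSERT OR REPLACE INTO scraped (url, title) VALUES (?, ?)", rows)
    return conn.execute("SELECT COUNT(*) FROM scraped").fetchone()[0]


# Usage: conn = sqlite3.connect("scraped.db"); save_rows(conn, [("https://example.com", "Example")])
```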

12. Outwit Hub

Outwit Hub is an intuitive web data extraction tool that makes it simple to import web data into Microsoft Excel or Access databases. This robust tool suits businesses that need to collect, store, and analyse web data. It is well suited to extracting CRM data, Google Analytics data, online polls, pop-up surveys, and corporate email addresses, as well as to general web scraping.

Outwit Hub gathers and arranges the data you require and delivers it in a fully configurable format that matches your workflow. You can also quickly upload data such as social network accounts, phone numbers, and email addresses to your CRM. Using your website login to retrieve website data can save days of manual data entry. Its efficient web crawler downloads only the content you are interested in, which keeps it fast.

13. Dexi.io

Dexi.io is a cloud-based data processing and scraping application that lets business owners make more use of their data without writing code or spending time on reports. Whatever business you are in, you will need to extract data from many sources. With the precision of full-text search, specific information can be extracted from numerous sources and huge datasets.

At its highest capacity, it can handle 150 requests per minute and crawl up to 400 GB of data. You can track products, prices, and availability by location, and analyse competitor density to find opportunities for regional growth. Its sophisticated web scraping robots mimic human browsing behaviour to extract valuable information from any website. Its data deliverables are normalised, validated, and system-neutral, designed for easy ingestion into any data environment.
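
At the stated cap of 150 requests per minute, evenly spaced requests are 60 / 150 = 0.4 seconds apart. If you pace your own client against a limit like this, a simple fixed-interval throttle is enough. The Python sketch below is hypothetical and is not part of Dexi.io; the `Throttle` class and its injectable `clock` and `sleep` parameters are assumptions made for testability.

```python
import time
from typing import Callable


class Throttle:
    """Hypothetical fixed-interval pacer: at most `max_per_minute` evenly spaced calls."""

    def __init__(
        self,
        max_per_minute: int,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.interval = 60.0 / max_per_minute  # e.g. 60 / 150 = 0.4 s
        self._clock = clock
        self._sleep = sleep
        self._next_allowed = clock()  # the first call may proceed immediately

    def wait(self) -> None:
        """Block until the next request slot, then reserve the following one."""
        now = self._clock()
        if now < self._next_allowed:
            self._sleep(self._next_allowed - now)
            now = self._next_allowed
        self._next_allowed = now + self.interval
```

Calling `wait()` before each request keeps a client at or under the cap. Fixed spacing is stricter than a token bucket, which would allow short bursts, but it is the simplest way to never exceed the limit.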

14. AutoHotkey

AutoHotkey is a versatile, free, and open-source scripting language for Microsoft Windows, written in C++. It lets you build macros quickly and create simple hotkeys and shortcuts. By automating repetitive operations, it makes working with Windows apps more efficient. You define hotkeys for the keyboard and mouse, and you can remap keys and buttons or use autocorrect. Creating hotkeys is quick and easy.

The software lets you develop complex scripts for many kinds of tasks, including form fillers, macros, and auto-clicking. As a complete scripting language suited to small projects and rapid prototyping, AutoHotkey lets developers work at their own comfort level. It excels at automating operational tasks. It is compact and works right out of the box. Its plain syntax lets you concentrate on the task at hand rather than on technicalities.

15. Sikuli

Sikuli is a clever, user-friendly, open-source test automation tool that lets you automate anything displayed on the screen. It recognises objects through image recognition and drives the GUI controls it finds. Sikuli scripts use screenshots to automate GUI interaction, and a project can keep images of all the site's elements.

Its user-friendly Sikuli-Scripter works easily with Selenium WebDriver and is useful for automating Flash objects. Sikuli's basic API makes scripts simple to write, and it can automate Flash games and Adobe Flash Player content. It offers numerous advantages for automating Windows processes, including strong image-based interaction, accurate visual matching, and built-in testing tools.